Feature extraction in high-dimensional data

Researchers: Milana Gataric, Richard J. Samworth, Tengyao Wang

When analysing high-dimensional data, the initial task is typically to reduce its dimension while keeping the most meaningful information conveyed by its original features. This can be achieved by constructing new features from those originally collected so that a better representation of the data is obtained. Principal Component Analysis (PCA) is a classical dimensionality reduction technique that constructs new features which maximise the explained variance in the data. However, each such feature is generally a linear combination of all the original ones, which often makes it difficult to interpret in practice.
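The density of classical PCA loadings can be seen in a small experiment. The following is a minimal sketch using NumPy on synthetic data; the function name `leading_principal_component`, the data-generating setup and all parameter values are illustrative assumptions, not part of the project.

```python
import numpy as np

def leading_principal_component(X):
    """Return the leading loading vector and its explained-variance ratio.

    Hypothetical helper for illustration: classical PCA computed from the
    eigendecomposition of the sample covariance matrix.
    """
    Xc = X - X.mean(axis=0)                  # centre each feature
    cov = Xc.T @ Xc / (X.shape[0] - 1)       # sample covariance (p x p)
    eigvals, eigvecs = np.linalg.eigh(cov)   # eigenvalues in ascending order
    v = eigvecs[:, -1]                       # direction of maximal variance
    return v, eigvals[-1] / eigvals.sum()

# Synthetic data (assumed setup): only the first 3 of 10 features share a signal.
rng = np.random.default_rng(0)
n, p = 200, 10
signal = rng.normal(size=(n, 1))
X = rng.normal(size=(n, p))
X[:, :3] += 2.0 * signal

v, ratio = leading_principal_component(X)
n_nonzero = np.count_nonzero(np.abs(v) > 1e-12)
print("loadings:", np.round(v, 3))
print("non-zero loadings:", n_nonzero, "of", p)
```

Although only three features carry the signal here, the estimated loading vector typically assigns a non-zero weight to every feature, which is what makes the resulting component hard to interpret.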

The need to reduce the dimensionality while simultaneously constructing interpretable features arises frequently across different domains in healthcare. For instance, when analysing the expression of different genes collected in a DNA microarray, it is informative to extract a subset of genes responsible for most of the variability in the collected data. Likewise, when analysing a hyperspectral image, it is important to reduce its dimension while simultaneously keeping only the richest spectral ranges.

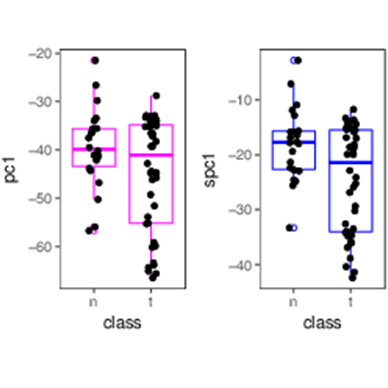

Sparse PCA is an approach for simultaneous feature extraction and feature selection in unsupervised learning. In contrast to classical PCA, it uses only a subset of the original features when constructing each new one, which makes the result easier to interpret in practice. Moreover, when only a few features carry the signal, sparse PCA can recover the leading components from fewer samples than classical PCA requires. Based on the idea of aggregating over carefully selected random projections of the data, we developed a new method for sparse PCA which is both efficient and reliable.
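To make the idea of aggregating over selected random projections concrete, here is a simplified sketch in NumPy. It is an illustration under stated assumptions, not the authors' published algorithm: it assumes the projections are random subsets of coordinates, that within each group the projection with the largest leading eigenvalue is the one selected, and that features are ranked by an averaged importance score. The function name and all parameters (`k`, `d`, `n_groups`, `n_per_group`) are hypothetical.

```python
import numpy as np

def sparse_pc_via_random_projections(X, k, d, n_groups=50, n_per_group=20, seed=0):
    """Illustrative sketch only (assumed procedure, not the published method).

    k: number of features to keep; d: size of each random coordinate subset.
    """
    rng = np.random.default_rng(seed)
    Xc = X - X.mean(axis=0)
    cov = Xc.T @ Xc / (X.shape[0] - 1)
    p = cov.shape[0]
    scores = np.zeros(p)
    for _ in range(n_groups):
        best_val, best_idx, best_vec = -np.inf, None, None
        for _ in range(n_per_group):
            # Random axis-aligned projection: pick d of the p coordinates.
            idx = rng.choice(p, size=d, replace=False)
            vals, vecs = np.linalg.eigh(cov[np.ix_(idx, idx)])
            # Select the projection capturing the most variance in this group.
            if vals[-1] > best_val:
                best_val, best_idx, best_vec = vals[-1], idx, vecs[:, -1]
        # Aggregate: weight each selected coordinate by eigenvalue and loading.
        scores[best_idx] += best_val * np.abs(best_vec)
    scores /= n_groups
    support = np.sort(np.argsort(scores)[-k:])   # k highest-scoring features
    vals, vecs = np.linalg.eigh(cov[np.ix_(support, support)])
    v = np.zeros(p)
    v[support] = vecs[:, -1]                     # sparse loading vector
    return v, support

# Synthetic data (assumed setup): signal shared by the first 3 of 30 features.
rng = np.random.default_rng(1)
n, p = 200, 30
X = rng.normal(size=(n, p))
X[:, :3] += 2.0 * rng.normal(size=(n, 1))

v, support = sparse_pc_via_random_projections(X, k=3, d=3)
print("selected features:", support)
```

In this synthetic setup one would expect the selected features to coincide with the three signal-carrying coordinates, and the returned loading vector is zero everywhere else, so the component can be read directly in terms of a few original features.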