A-B quasiconvexity and image processing

Researchers: Elisa Davoli (University of Vienna), Irene Fonseca (Carnegie Mellon University), Pan Liu

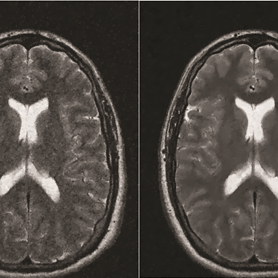

The variational formulation of problems in image processing often contains two important elements: a tuning parameter (e.g. $\alpha \in \mathbb{R}^N$) and a regularizer (e.g. $TV$ or $TGV$). In the existing literature the regularizer is fixed a priori, and most of the effort is concentrated on identifying the best parameters; one successful approach is the bilevel (parameter) training scheme. However, there is no evidence suggesting that $TGV$ will always perform better than $TV$, or vice versa. The main focus of this project is to investigate how to optimally tune both the parameter and the regularizer in order to achieve the best reconstructed image. Having only two regularizers to choose from (e.g. $TV$ and $TGV$), however, makes the problem less interesting. To be precise, the optimal regularizer would then simply be determined by performing the parameter training scheme twice, once for $TV$ and once for $TGV$, and finer texture effects, which might be captured by a regularizer lying between $TV$ and $TGV$ in a suitable sense, would be neglected in the optimization procedure.
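
For concreteness, the bilevel parameter training scheme mentioned above can be written, in its simplest form with the $TV$ regularizer and a scalar parameter, as follows. This is a minimal sketch of the standard formulation from the literature, not necessarily the exact scheme used in this project; the symbols $u_c$ (a clean training image), $u_\eta$ (its noisy version) and $Q$ (the image domain) are assumptions introduced here for illustration.

$$
\begin{aligned}
\text{Level 1:}\quad & \alpha^* \in \arg\min_{\alpha > 0} \; \| u_\alpha - u_c \|_{L^2(Q)}^2, \\
\text{Level 2:}\quad & u_\alpha := \arg\min_{u \in BV(Q)} \left\{ \| u - u_\eta \|_{L^2(Q)}^2 + \alpha\, TV(u) \right\}.
\end{aligned}
$$

Level 2 reconstructs an image for a given $\alpha$, and Level 1 selects the $\alpha$ whose reconstruction is closest to the clean image. Replacing $TV$ by $TGV$ gives the analogous scheme with a vector-valued parameter.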

In this project we first hope to design a new class of regularizers which gives a meaningful interpolation between the regularizers $TV$ and $TGV$, and which also guarantees that the collection of such spaces itself exhibits certain compactness and lower semicontinuity properties. For this purpose, we introduce a new class of regularizers, denoted by $ABQ_{A,B}^{1+s}(u)$, where $0<s<1$, by incorporating the theory of $A$-$B$ (Morrey)-quasiconvex operators and fractional Sobolev spaces. We shall show that the $A$-$B$ (Morrey) regularizer $ABQ_{A,B}^{1+s}(u)$ provides a unified approach to the existing popular regularizers. The second part of this project aims to design a training scheme which is able to train the (Morrey)-quasiconvex operators in the newly proposed $ABQ_{A,B}^{1+s}(u)$ regularizer and the tuning parameters simultaneously, and to prove the existence of solutions of such a training scheme. We also hope to learn the relations among the existing regularizers by studying this new regularizer.